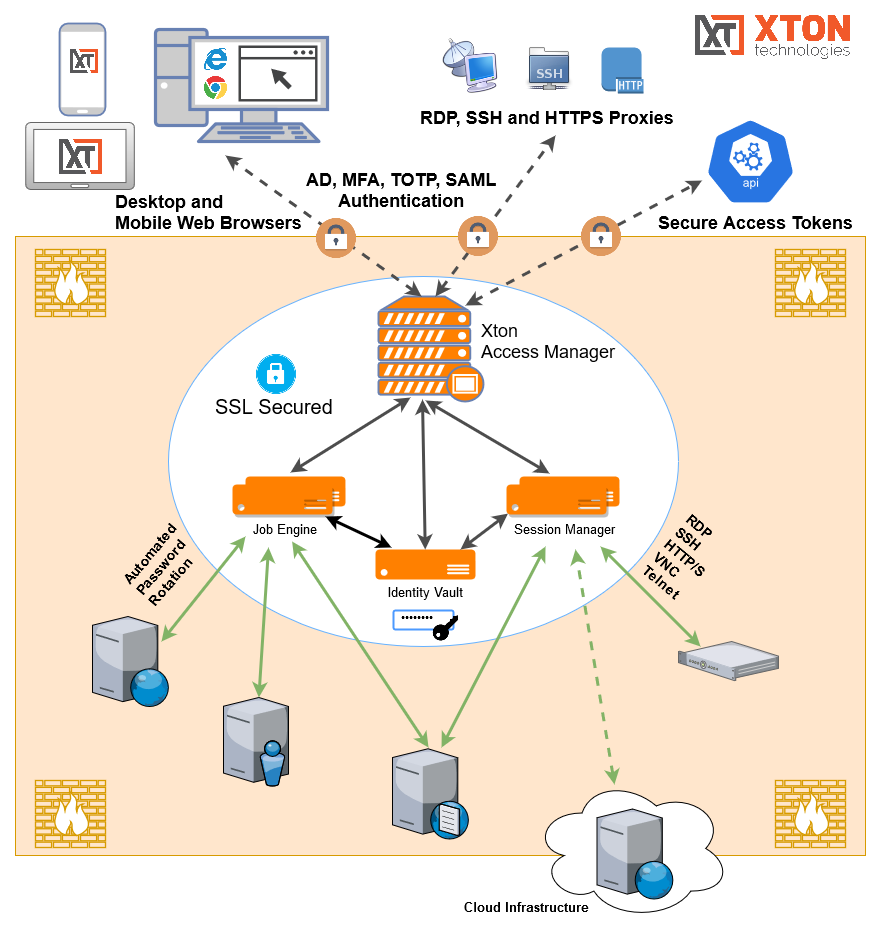

Understanding the Xton Access Manager Architecture

Xton Access Manager server is installed on one or more physical or virtual computers.

We call each computer a node.

A single-node setup is quick and easy.

However, administrators might decide to spread the server installation across multiple nodes to increase performance, to improve availability (in case one of the nodes malfunctions), or to improve security (by separating the master password from the encrypted data).

Xton Access Manager server is constructed from several types of blocks.

Administrators can install all these blocks on one single node (remember, a node is just a physical computer or a virtual machine).

This is what we recommend for a trial or for light system use because it simplifies the installation process.

Note that each node might contain multiple blocks of different types.

Alternatively, an administrator may decide to install some of the blocks on one node and some other blocks on the other nodes to increase overall system performance.

Moreover, an administrator can install more blocks of the same type on different nodes to increase system performance, to improve system availability or to improve internal system security.

Xton Access Manager's server blocks

So what are these blocks that together construct an Xton Access Manager server?

- Application GUI or WEB Front End is the block that interacts with users through the web GUI or with scripts through the API. The server may contain multiple Application GUI blocks installed on different nodes to serve users faster. Each Application GUI block can serve many user or script requests; however, as more users access the system, more Application GUI blocks might be installed. When the server uses multiple Application GUI nodes, administrators should install a Load Balancer block (on one of the nodes or on a separate node) to balance the use of the WEB Front Ends.

- Job Engine is the block that executes background processes such as password resets or discovery. The server might contain multiple Job Engine blocks installed on different nodes. Each Job Engine block can handle many password resets or discovery queries; however, adding more Job Engine nodes speeds up these tasks because more of them are executed at the same time. The system includes flexible configuration of the Job Engine on each node, which allows some processes to be disabled on some nodes and more threads (parallel tasks) to be added on others.

- Session Manager is the Jump Server gateway. This block displays remote computer screens in the users' browsers. The server might contain multiple Session Managers, and each Session Manager can handle multiple simultaneous sessions. When the server has to support too many sessions, an administrator can add more Session Manager nodes. Moreover, a Session Manager node might be installed at a strategic location that can access certain computers on the network. The server includes flexible configuration that allows a Session Manager to be selected depending on the location of the destination computer.

- Directory Service is the block that contains local system users and the master password. The server can contain only one Directory Service. However, an administrator may decide to host this block on a separate node to physically separate the master password from the encrypted data in the database.

- RDBMS is the block that stores all internal system data (with the exception of the local users and master password stored in the Directory Service). The server can contain only one RDBMS node. The server is shipped with an internal database that should be installed on one of the nodes together with either the Application GUI or the Job Engine. However, an administrator might decide to use one of several supported external RDBMS products instead. We do not count the RDBMS node in our licensing model.

- Federated Sign-In is a block that performs user authentication. The server might include only one Federated Sign-In block.
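The placement rules in the list above can be captured in a small planning check. The following is only an illustrative sketch, not part of the product: the `Node` class, the `check_deployment` function and the node names are hypothetical, and the rules encode only what the list states (a single Directory Service, RDBMS and Federated Sign-In block, and a Load Balancer when several Application GUI blocks are used).

```python
from collections import Counter
from dataclasses import dataclass, field

# Blocks that the text above limits to one instance per server.
SINGLETON_BLOCKS = {"Directory Service", "RDBMS", "Federated Sign-In"}


@dataclass
class Node:
    name: str                                   # hypothetical node label
    blocks: list = field(default_factory=list)  # block names installed on this node


def check_deployment(nodes):
    """Return a list of problems; an empty list means the layout is consistent."""
    counts = Counter(block for node in nodes for block in node.blocks)
    problems = []
    for block in sorted(SINGLETON_BLOCKS):
        if counts[block] > 1:
            problems.append(f"{block}: only one allowed, found {counts[block]}")
    if counts["Application GUI"] > 1 and counts["Load Balancer"] == 0:
        problems.append("Multiple Application GUI blocks need a Load Balancer")
    return problems


# A single-node layout with every block passes the check.
single = [Node("node-1", ["Application GUI", "Job Engine", "Session Manager",
                          "Directory Service", "RDBMS", "Federated Sign-In"])]
print(check_deployment(single))
```

A plan with two Application GUI nodes and no Load Balancer would be reported as a problem, which mirrors the recommendation in the Application GUI item.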

Typical deployment architecture scenarios

To illustrate the use of the nodes we will describe several typical deployment architecture scenarios:

- Trial. All blocks could be installed on a single node using default installation options.

- Light Use. All system components could be installed on a single node, as in the Trial scenario, but exposed to the outside world through a Load Balancer with a secured HTTPS (SSL) connection on the standard HTTPS port 443. Clients will need to install their own certificate for the known host name. The Federated Sign-In service might or might not be installed in this case, depending on whether basic authentication through the secure channel is enough for the system operations. This is our primary recommendation for initial or light use in a typical SMB organization.

- Enterprise Database. While the internal RDBMS shipped with the system is a reliable database, it is possible to connect all components (Application GUI and Job Engines) to an external database schema supplied by the user. The system will create and populate the data tables automatically during the first run or data import. The database could be a Derby database installed on a different node, or it could be any other certified RDBMS supplied by the user.

- High Availability scenario is achieved when the Application GUI, Job Engine and Session Manager components are deployed to two different nodes that are connected to a single RDBMS, accessed through a single Load Balancer, and use a single Directory Service, possibly offloaded to a third node. This three-node setup is the minimal configuration for a highly available, moderate-performance deployment that includes the improved security option.

- Many Users scenario addresses the situation in which many users access the system's Application GUI simultaneously, mostly to work with data managed by the system rather than to access many remote computers. In this case the Application GUI could be deployed to several nodes, accessed through the Load Balancer and connected to the same RDBMS and Directory Service, to improve system response time.

- Many Sessions scenario addresses the case of many simultaneous sessions to remote computers established by system users at the same time. The sessions might include multiple users attached to the same computer (session sharing) or users accessing different computers. In this situation the Session Manager could be installed on multiple nodes to reduce the load on each individual Session Manager. The Application GUI load balances among the Session Managers selected to access a specific computer group, based on the number of sessions currently open on each Session Manager. In addition, some Session Manager components could be installed at strategic network locations to provide (better) access to certain network resources. IT creates Session Manager proximity groups that define groups of Session Manager services that access certain computers selected by IP address or by name pattern.

- Many Jobs scenario covers the case when there are many parallel password resets, script executions, notifications, or discovery jobs running in the background. In this case the Job Engine component could be installed on multiple computers connected through a single RDBMS to reduce the load on any particular node. The Job Engine component could be configured to increase or decrease the thread load for each process or to disable certain processes on some nodes completely. For example, some computers might only handle password reset jobs while other computers might only handle notifications. In addition, some Job Engine components could be installed at strategic network locations to provide (better) access to certain network resources.

- Combination scenario allows any of the previous deployment configurations to be used together. The complete system application farm might contain multiple nodes distributed across multiple network locations and accessed through a single HTTPS entry point.
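The Many Sessions scenario above mentions balancing by the number of sessions currently open on each Session Manager. A minimal sketch of that idea follows, assuming the session counts are already known; the function name and the Session Manager names are hypothetical, and the tie-breaking rule is an assumption made here for determinism, not documented product behavior.

```python
def pick_session_manager(open_sessions):
    """Pick the least loaded Session Manager.

    open_sessions maps a Session Manager name (hypothetical labels here)
    to the number of sessions currently open on it.
    """
    if not open_sessions:
        raise ValueError("no Session Manager available")
    # Sort key is (session count, name): fewest sessions wins, and the
    # name breaks ties so the choice is deterministic (an assumption).
    return min(open_sessions, key=lambda name: (open_sessions[name], name))


print(pick_session_manager({"sm-east": 12, "sm-west": 4, "sm-lab": 9}))
```

In this example the call selects `sm-west`, since it has the fewest open sessions.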

Remote or Isolated XTAM Nodes

The Concept and Architecture

You install an XTAM node in an isolated network to provide access to assets in this network and to execute jobs such as password resets on the assets in the isolated network.

One node in the isolated network will serve all assets it can access within this network. In this scenario, users gain access to all assets from this isolated network through the main XTAM node, while the isolated node works transparently behind the scenes to serve these assets.

This is not a high availability setup because both nodes have access to different assets (one in the main network and the other one in the isolated network) so you need both of them to operate.

When the main node is down, no assets are accessible.

The following describes the architecture for deploying an isolated Session Manager (for sessions) and an isolated Job Engine (for task execution such as password resets).

This is a conceptual description to illustrate the architecture to help with design decisions. For details and configuration options, please read the appropriate guide linked below.

The isolated node should be installed using its internal database and then configured to serve as an isolated node.

It does not need to connect to the same database as the main XTAM node, because this deployment scenario assumes that the back-end database of the main XTAM node is not accessible to the isolated node from inside its network.

Session Manager

To provide remote access to assets in the isolated network, the main node needs to connect to the Session Manager in the isolated network using the proprietary XTAM protocol, which it does using port 4822.

This port should be opened in the isolated network's firewall toward the isolated XTAM node so that the main node can connect.
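Before configuring the Session Manager link, it can help to confirm from the main node that the port is reachable at all. The sketch below is a generic TCP check using the Python standard library; the function name and the host name in the usage line are hypothetical. It only verifies that a TCP connection can be opened and does not speak the XTAM protocol or validate certificates.

```python
import socket


def port_is_open(host, port=4822, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False


if __name__ == "__main__":
    # "isolated-node.example.internal" is a placeholder, not a real host.
    print(port_is_open("isolated-node.example.internal"))
```

A result of `False` usually points to a firewall rule or a service that is not listening, but it cannot tell the two apart.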

The Session Manager traffic between the main node and the isolated node is secured by the certificates exchanged between the nodes.

To configure this, you will need to copy the certificate from the main XTAM node to the isolated node during configuration.

Lastly, the main node should have a configuration in the Administration > Settings > Proximity Groups screen instructing the main node to route traffic for certain assets to the isolated node. There are several criteria you can choose from:

- route all traffic to certain IP addresses to the isolated node and all other assets will be served by the main node

- route all traffic to certain computer names (by DNS name masks) to the isolated node and all other assets will be served by the main node

- route all traffic for all assets located in a certain XTAM Vault (folder) to the isolated node and all other assets will be served by the main node
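The three criteria above can be pictured as a simple matching function. This is a conceptual sketch only: the `Asset` class, the `route` function, the shell-style wildcard syntax for name masks, and the idea of evaluating several criteria together are all assumptions made for illustration, not a description of how Proximity Groups are implemented.

```python
import fnmatch
import ipaddress
from dataclasses import dataclass


@dataclass
class Asset:
    host: str   # IP address or DNS name of the asset
    vault: str  # name of the XTAM Vault (folder) that holds the record


def route(asset, ip_networks=(), name_masks=(), vaults=()):
    """Return "isolated" when any criterion matches, otherwise "main"."""
    try:
        address = ipaddress.ip_address(asset.host)
    except ValueError:
        address = None  # host is a DNS name, not an IP literal
    if address is not None:
        # Criterion 1: the asset's IP address falls in a listed network.
        if any(address in ipaddress.ip_network(net) for net in ip_networks):
            return "isolated"
    elif any(fnmatch.fnmatch(asset.host.lower(), mask.lower())
             for mask in name_masks):
        # Criterion 2: the DNS name matches a mask (wildcard syntax assumed).
        return "isolated"
    # Criterion 3: the asset is stored in a designated vault.
    if asset.vault in vaults:
        return "isolated"
    return "main"


print(route(Asset("10.20.0.5", "HQ"), ip_networks=["10.20.0.0/16"]))
```

Assets that match none of the criteria fall through to the main node, as in each item of the list.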

Use Case #2, Network Isolation, in the article provides additional details here.

Job Engine

To make the isolated node execute tasks such as password reset jobs on the assets inside the isolated network, you need to create a Service user in the main node using the Administration > Local Users screen.

After that, you need to designate this user account as a Service using the Administration > Global Roles screen. Lastly, you need to grant this user permissions (Owner with Execute rights) for the assets the isolated node should serve.

This permission can be granted for a Vault, a Folder or individual Records.

The isolated node, when configured, will execute jobs only for the assets that have this user in their permission sets.

The main XTAM node will not execute jobs for the assets designated for the isolated node.

After the service user is created, connect the isolated node to the main node by using the XTConnect command, providing the https URL of the main node and credentials of the service user created earlier.

After this command, the isolated node will communicate with the main node using HTTPS traffic from inside of the isolated network.

The main node should be reachable from the isolated node at its regular URL. Port 443 should be open in the main node's firewall; you can check this by browsing to the main node from the isolated node.

When properly configured, the isolated node will poll the main node for the jobs to execute for the assets designated for the isolated node by means of the permissions granted for the service account, execute those jobs for the local assets and send results back to the main XTAM node.
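The poll, execute and report cycle described above can be illustrated with a short sketch. The isolated node performs this internally, so the code is purely conceptual: `fetch_jobs`, `run_job` and `send_result` are hypothetical callables standing in for the HTTPS requests to the main node and the local task execution, and the polling interval is an arbitrary assumption.

```python
import time


def poll_once(fetch_jobs, run_job, send_result):
    """One cycle: fetch designated jobs, run them locally, report each outcome."""
    handled = 0
    for job in fetch_jobs():
        try:
            outcome = {"job": job, "status": "ok", "detail": run_job(job)}
        except Exception as exc:
            # A failed job is reported back instead of stopping the cycle.
            outcome = {"job": job, "status": "failed", "detail": str(exc)}
        send_result(outcome)
        handled += 1
    return handled


def poll_forever(fetch_jobs, run_job, send_result, interval_seconds=30.0):
    """Repeat the cycle; the interval is an assumption for this sketch."""
    while True:
        poll_once(fetch_jobs, run_job, send_result)
        time.sleep(interval_seconds)
```

The point of the sketch is the direction of traffic: every request originates from the isolated node, which is why only outbound HTTPS access to the main node is required.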

Use Case #2, Remote Job Engines operating in isolated networks, in the article provides additional details here.

Additional Nodes

More isolated network nodes could be added in a similar fashion by using additional proximity groups for session managers and additional service accounts to designate assets for task execution by these remote nodes.

One of the design decisions in content organization is how to group assets for the isolated nodes to simplify management.

The simplest solution is to put all assets related to the isolated network into a corresponding XTAM Vault, set up the Session Manager proximity group for this vault, and grant permissions to the service account at the vault level.

There are other solutions for this designation too.